ChatGPT Free, Doubao Paid: Two Approaches to the Same Business Challenge

An interesting, somewhat counterintuitive contrast is emerging: overseas, ChatGPT keeps strengthening its free tier to maximize user engagement, while in China, Doubao is testing tiered subscriptions that place some advanced features behind a paywall.

At almost the same time, these two leading AI products are moving in opposite directions: one is lowering barriers, while the other is introducing pricing.

On the surface, this appears to be a divergence between “free vs. paid” strategies. However, at a deeper level, it represents two optimal solutions to the same business problem under different constraints. As computing power shifts from being a cost item to a scarce resource, and as conversations evolve from mere functionality to entry points, the essential question for large model products is no longer whether to commercialize, but how to integrate the goals of “controlling costs” and “expanding scale” into a sustainable model.

1. There Is No Truly Free AI

Before discussing commercial strategies, let’s return to the fundamental cost structure.

Large models are not like traditional internet products, whose marginal costs shrink toward zero as scale grows. They resemble high-power machines: training requires investment, and inference also incurs costs. GPUs, bandwidth, electricity, engineering, operations, and ongoing research and development all consume cash. Every seemingly lightweight conversation comes with a real resource cost.

This leads to a harsh reality: large model services can be temporarily free, but it’s challenging to offer them for free indefinitely.

The term “free” essentially means covering costs in another way—either through stronger financial backing, clearer monetization mechanisms, or by restricting access to high-consumption scenarios.

Thus, the industry is destined to move towards stratification: basic needs should be as accessible as possible, while high-value, high-consumption, and high-frequency usage must be priced.

In this sense, ChatGPT and Doubao are not fundamentally different. Both are doing the same thing: maintaining a sufficiently large free pool to capture user attention and product entry points, while incorporating more expensive capabilities and productivity scenarios into a paid system, allowing heavy users to pay for their computational consumption.
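The trade-off between a free pool and a paid tier can be made concrete with some back-of-the-envelope arithmetic. The sketch below is only illustrative: the function name and all of the numbers are hypothetical assumptions, not figures from ChatGPT or Doubao. It asks what share of users must subscribe for subscription revenue alone to cover the inference cost of everyone, free users included.

```python
def break_even_paid_share(cost_free_user: float, cost_paid_user: float, price: float) -> float:
    """Share of users p who must subscribe for revenue to cover inference cost.

    Solves p * price = (1 - p) * cost_free_user + p * cost_paid_user for p.
    All inputs are per user per month, in the same currency.
    """
    margin = price - cost_paid_user  # what one subscriber contributes after their own cost
    if margin <= 0:
        raise ValueError("price must exceed the cost of serving a paid user")
    return cost_free_user / (cost_free_user + margin)


# Hypothetical inputs: a free user costs 0.5 per month to serve,
# a heavy paid user costs 8.0, and the subscription is priced at 20.0.
share = break_even_paid_share(cost_free_user=0.5, cost_paid_user=8.0, price=20.0)
print(f"Break-even paid share: {share:.1%}")  # prints "Break-even paid share: 4.0%"
```

Under these assumed numbers, the required paid share rises if free usage becomes more expensive to serve and falls as the margin per subscriber grows, which is why both capping free-tier consumption and pricing heavy usage pull in the same direction.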

The difference lies in their chosen monetization paths, which stem from the different external conditions of their respective markets.

2. Two Paths for Monetizing Large Models: Entry Platforms and Productivity Tools

In the overseas market, ChatGPT is more likely to position itself as an “entry platform.”

When the user base is large enough and usage frequency is high, the platform can benefit from two types of revenue: subscriptions and a commercial system built around the entry point—including but not limited to advertising, shopping guides, distribution, and even broader “transaction matching.”

Once the entry point is established, offering stronger capabilities for free becomes not just a perk but an offensive strategy: a better default experience wins a larger user pool, higher retention, and stronger brand positioning, and subscription and platform revenue then cover the higher inference costs.

In the domestic market, Doubao faces pressure to balance its “compute ledger” sooner.

Domestic users are more sensitive to commercial content embedded in conversational products, and they have long been conditioned by a “free internet.” Subsidies can buy scale, but once that scale is reached, every conversation becomes a quantifiable cost, and free quickly turns from a growth strategy into financial pressure.

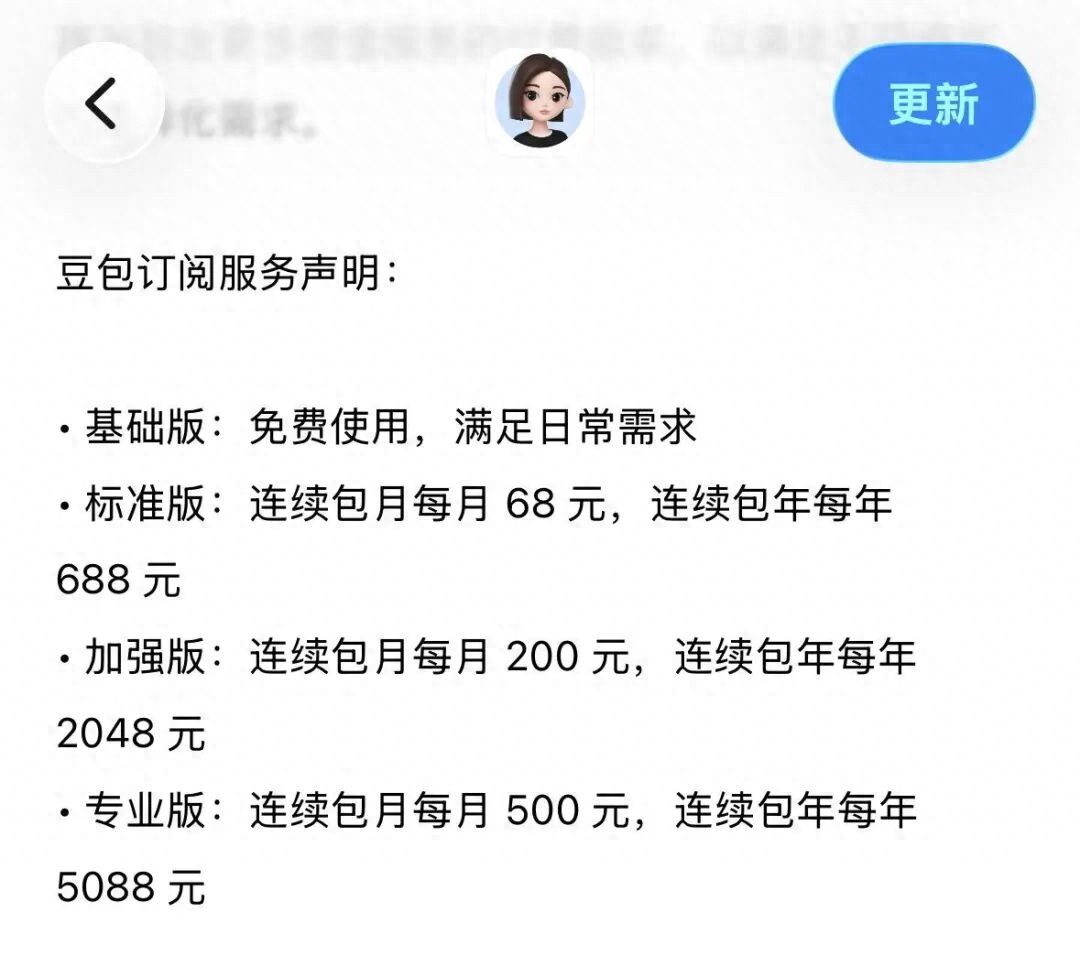

Thus, Doubao’s more reasonable choice is to price “high-consumption scenarios”: keep a free experience that is good enough to use, while tiered subscriptions bring high-frequency, professional, and intensive productivity needs into the paid tier, containing cost risk before pursuing longer-chain ecosystem partnerships.

This also explains why the market often perceives Doubao’s paid features as “productivity-oriented” capabilities: for example, stronger content generation, more complex documents and presentations, data analysis, and even higher quotas and more stable responses. What they have in common is not that they are “cooler,” but that they are “more resource-intensive.”

Doubao’s decision to charge is not a sudden about-face, but an update to how it allocates compute: light use stays open to everyone, while heavy use is priced according to its value.

Therefore, rather than viewing the two as “one generous and one restrained,” it is more accurate to say that ChatGPT aims to grow into an entry platform, while Doubao opts to quickly package its stronger capabilities into productivity tools.

One follows “platform logic,” the other “SaaS logic”: the former pursues scale and network effects, while the latter seeks controllable unit costs and predictable paid conversion.

3. The Domestic Large Model Industry Enters the “Pricing Era”

As leading products begin to take pricing seriously, the impact will extend beyond themselves. Pricing will create a “benchmark effect” that compels other players in the industry to answer the same questions: How should you charge for the capabilities you provide? Whom should you charge? At what granularity can you charge without driving users away?

Until recently, competition at the application layer of domestic large models has resembled a “subsidy race”: using free access and low prices to win scale and a story to tell investors.

However, as usage volumes climb and compute expenses shift from “tolerable investments” to “structural costs that cannot be ignored,” the industry will naturally enter a more mature and harsher phase: the question will no longer be who can burn more money, but who can realize value more effectively.

This will lead to two direct consequences.

First, the advantage of the leading players will grow stronger.

This is because pricing fundamentally relies on two factors: user familiarity and high retention. Only when enough people stay with your product long-term can you expect some heavy users to pay for more stability, higher quotas, and stronger capabilities.

Leading players can absorb higher early investment and recover costs faster once a payment system is in place, so they are better positioned to define “industry prices.”

Second, the space for mid-tier and startup companies will be squeezed.

The reason is simple. They often lack two essential elements: a free traffic pool large enough to support platform monetization, and brand and product stickiness strong enough to support high-priced subscriptions.

Thus, their most realistic survival path will shift from “being a general assistant” to “delivering clear value through vertical products,” either by making ROI sufficiently clear in industry applications or by forming irreplaceable efficiency improvements in toolchains and workflows.

Once the pricing era arrives, the “middle layer” of general-purpose capabilities will become a harder place to survive.

4. Will Domestic Users Be Willing to Pay for “Certainty”?

The success of pricing strategies in the large model industry hinges on a seemingly simple yet decisive variable: are domestic users willing to pay for AI?

This is not an emotional issue, but a matter of value structure.

Users are rarely willing to pay for “the ability to chat,” but they may pay for “consistent output,” “time savings,” or “guaranteed success rates.” In other words, what users pay for is not “stronger” but “more certain.”

As AI moves from chatting to productivity, and from one-off answers to ongoing task execution, a subscription is no longer just about “buying permissions.” It is about buying stability, quotas, and a reliable component within a workflow.

Doubao’s tiered subscription is, in some sense, an industry-level stress test: it is not testing whether users are willing to spend money, but whether AI can truly be embedded into daily workflows, becoming a measurable, reusable, and sustainable productivity tool.

If the test succeeds, the ceiling for domestic AI commercialization will rise; if it fails, the industry may remain in a landscape dominated by low prices and free tiers for longer, until the next shift in product form (such as more mature agents) brings a new value structure and a new rationale for pricing.

Conclusion: The Era of Large Models Burning Money Is Coming to an End

Thus, “ChatGPT free, Doubao paid” is not about right or wrong paths, nor product superiority, but rather rational business choices under different market rules:

ChatGPT tends to use free access as fuel for expanding its entry point, hoping to cover costs with platform revenue and subscriptions; Doubao leans toward using paid tiers as a compute allocation system, pricing heavy demand first to keep costs controllable and then seeking broader commercial space.

For the industry, the more important signal is that large models are leaving behind the narrative of unchecked growth and entering the stage of “realizing commercial value.”

Free is no longer the default option, and low prices are no longer inherently virtuous. Pricing is beginning to govern how scarce compute is allocated, which means that models, clouds, hardware, and application layers will all be forced to re-examine themselves through more realistic unit economics.

For ordinary users, the conclusion is quite simple: basic AI needs will likely remain free for the long term; what is worth considering paying for is high-frequency, professional use with clear demands for efficiency and stability.

There is no need to worry about fees or to get overly excited about free tiers. In the pricing era, simply choose according to your needs and spend on capabilities that can significantly improve your output.
